Step into the world of artificial intelligence for a moment. Picture this: you type a question into ChatGPT or Gemini, and before you can even sip your coffee, boom: an articulate, context-rich response appears on your screen. But have you ever paused to wonder how on earth that happens? What’s swirling behind that digital curtain to make AI talk (almost) like us?

It’s the intersection of massive data, neural storytelling, and the genius of human innovation. Today, we’re going behind the scenes to unpack how Large Language Models (LLMs) generate their answers, and trust me, by the end, you’ll see AI in an entirely new light.

Pattern Over Memory: The Real Secret Behind LLM Intelligence

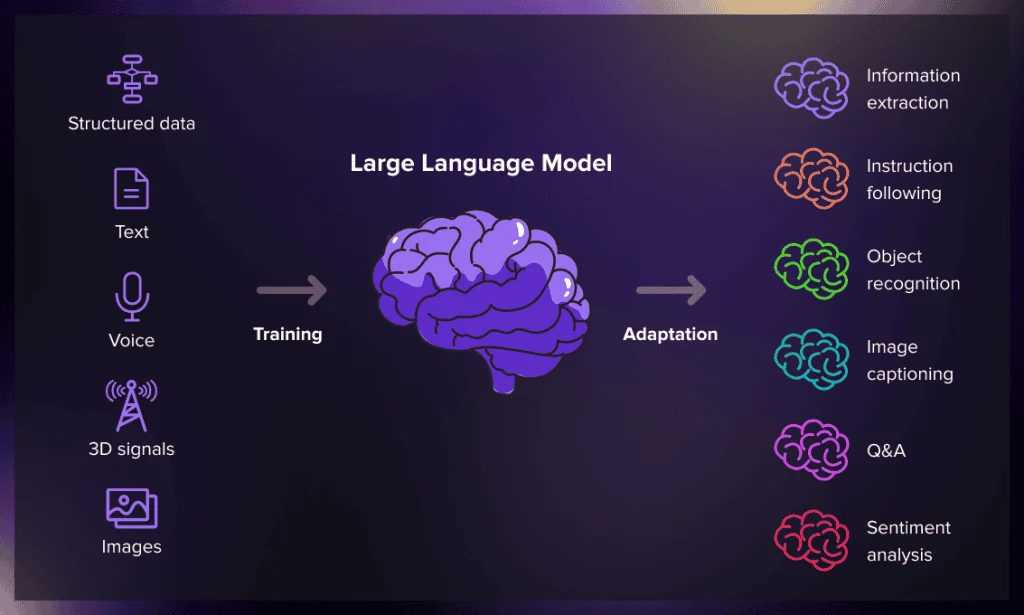

Let’s start simple. An LLM, or Large Language Model, is essentially a piece of advanced software trained on massive amounts of text: books, journals, web pages, conversations, code repositories, and more. But rather than memorizing information like a parrot, LLMs learn the patterns buried deep within language itself.

They study how words connect, how ideas are framed, and even how people express emotions or opinions. Think of training an LLM like teaching a student who has read almost every article on the internet, but instead of remembering specific sentences, they remember how those sentences work together.

Every time you prompt an LLM, it doesn’t “look up” the answer. Instead, it predicts what words or ideas should come next based on everything it has learned. This predictive magic lies at the heart of how responses feel coherent, insightful, and surprisingly human.

The Secret Recipe: Tokens, Context, and Probability

Imagine you’re finishing someone’s sentence: “The sky is…” There’s a high chance you’ll say “blue.” That’s a prediction. LLMs do this too, but at a scale you can barely imagine.

They don’t see text as words; they see it as tokens, small fragments that can be anything from a single character or syllable to an entire word. When you prompt an AI with “Explain how photosynthesis works,” it breaks the prompt down into tokens and calculates which token should come next in the response by assigning a probability to each candidate.
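To make the idea of tokens a little more tangible, here is a minimal sketch of a toy tokenizer. It assumes a tiny hand-written vocabulary (`TOY_VOCAB` is made up for illustration) and uses simple greedy longest-match splitting. Real tokenizers learn their vocabularies from data and are considerably more sophisticated.

```python
# Toy greedy longest-match tokenizer. TOY_VOCAB is a made-up vocabulary
# for illustration; real tokenizers learn theirs from large text corpora.
TOY_VOCAB = ["explain", "how", "photo", "synthesis", "works", " "]


def tokenize(text, vocab=TOY_VOCAB):
    """Split text into the longest matching vocabulary pieces, left to right."""
    text = text.lower()
    pieces = sorted(vocab, key=len, reverse=True)  # try longest pieces first
    tokens = []
    i = 0
    while i < len(text):
        for piece in pieces:
            if text.startswith(piece, i):
                tokens.append(piece)
                i += len(piece)
                break
        else:
            # No vocabulary piece matched: fall back to a single character.
            tokens.append(text[i])
            i += 1
    return tokens


tokens = tokenize("Explain how photosynthesis works")
# Map each token to an integer ID (its position in the toy vocabulary).
token_ids = [TOY_VOCAB.index(t) if t in TOY_VOCAB else -1 for t in tokens]
print(tokens)
print(token_ids)
```

Notice that “photosynthesis” is split into two pieces. That is the point: a model can handle rare or long words by composing them from smaller fragments it already knows.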

The computation isn’t guesswork; it’s statistical artistry. The model draws on the billions of patterns it absorbed from training data to assign a probability to every possible next token, then picks one. What you get back is not rote repetition; it’s an original reconstruction of meaning derived from probability and learned association.
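Here is a rough sketch of that prediction step for “The sky is…”. The raw scores below are invented for illustration (a real model computes them from its parameters); the softmax function that turns scores into probabilities is standard. Models typically either take the most likely token or sample from the distribution, so both are shown.

```python
import math
import random


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - highest) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


# Hypothetical scores for what might follow "The sky is" (made up).
scores = {"blue": 4.0, "clear": 2.5, "falling": 1.0, "green": 0.2}
probs = softmax(scores)

# Option 1: always take the most probable token (greedy decoding).
most_likely = max(probs, key=probs.get)

# Option 2: sample in proportion to probability, which adds variety.
rng = random.Random(0)  # fixed seed so the sketch is repeatable
sampled = rng.choices(list(probs), weights=list(probs.values()), k=1)[0]

print(most_likely, sampled)
```

Sampling is one reason the same prompt can produce slightly different answers on different runs.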

The Neural Powerhouse: Where AI Gains Its Intuition

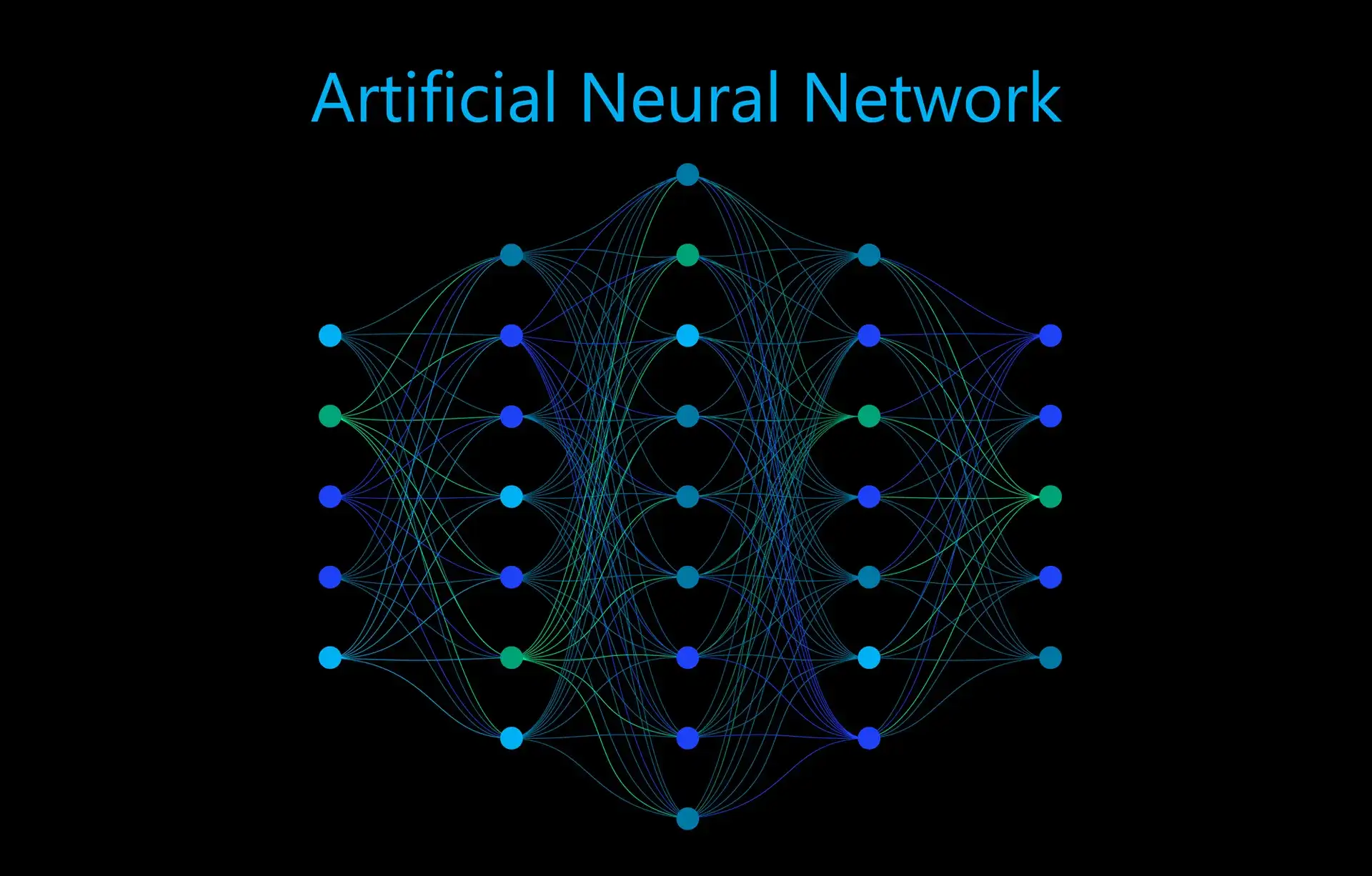

You’ve likely heard the term “transformer model.” This isn’t sci-fi; it’s the architectural backbone of today’s most powerful LLMs.

Transformers are designed to understand context across small and large spans of text. Unlike older AI models that could only process information sequentially, transformers can analyze all the words in a sentence simultaneously and identify how each relates to the others. This “attention mechanism” allows the model to weigh which words matter most to the meaning of the entire message.

For example, in the sentence, “The CEO told the manager that she would approve the deal,” the model uses context to figure out who “she” refers to. It’s this ability to grasp nuance and relationship that makes transformer-based AI feel intuitive and natural.
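For the curious, the attention idea can be sketched in a few lines. This is a simplified version of scaled dot-product attention, assuming made-up two-number vectors for three words from the example sentence. Real transformers use learned vectors with hundreds or thousands of dimensions and separate learned projections for queries, keys, and values, which this sketch skips.

```python
import math


def softmax(xs):
    """Turn a list of scores into weights that sum to 1."""
    highest = max(xs)
    exps = [math.exp(x - highest) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over small Python lists."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # How strongly does this word match every other word?
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Blend the value vectors according to those weights.
        blended = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(blended)
        all_weights.append(weights)
    return outputs, all_weights


# Made-up vectors: "she" is deliberately placed closer to "CEO".
vectors = {"CEO": [1.0, 0.0], "manager": [0.0, 1.0], "she": [0.9, 0.1]}
words = list(vectors)
x = [vectors[w] for w in words]

outputs, weights = attention(x, x, x)
she_weights = dict(zip(words, weights[words.index("she")]))
print(she_weights)
```

In this toy setup “she” attends more to “CEO” than to “manager” only because the vectors were chosen that way; a trained model learns such relationships from data.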

Training on the Internet: A Double-Edged Sword

Training large language models demands staggering amounts of data and computational power. It’s like feeding a superbrain everything that’s ever been written and then teaching it to develop good judgment.

The data comes from books, open forums, articles, research papers, even portions of code. While this gives the model incredible versatility, it also introduces challenges: bias, outdated information, and factual inaccuracy. Modern LLM developers invest enormous effort in fine-tuning the models with curated datasets, supervised feedback, and reinforcement learning to make their answers more reliable and ethical.

When OpenAI, Google, or Anthropic train new models, they often run multiple phases of “alignment,” a process where human experts grade responses and teach the model what’s appropriate, accurate, and valuable. So yes, there’s a very human touch guiding how AI learns to talk.

Behind Every Response: Computation in Action

Let’s visualize what happens when you ask a question like, “What’s the future of energy?”

First, your words get converted into tokens. The model processes those tokens through billions of parameters, tiny adjustable “knobs” inside its neural network that encode learned language features. Then it predicts the most probable next token, appends it to the text, and repeats, building the answer token by token until the response is complete.
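That predict, append, repeat loop can be sketched as follows. The lookup table `NEXT_TOKEN_PROBS` is a hypothetical stand-in for a trained network, with invented probabilities, and it only looks at the last token. A real model conditions on the entire text so far.

```python
# Hypothetical next-token table standing in for a trained network.
# All tokens and probabilities here are invented for illustration.
NEXT_TOKEN_PROBS = {
    "<start>": {"solar": 0.6, "wind": 0.4},
    "solar": {"power": 0.7, "panels": 0.3},
    "wind": {"power": 0.9, "farms": 0.1},
    "power": {"grows": 0.8, "<end>": 0.2},
    "panels": {"<end>": 1.0},
    "farms": {"<end>": 1.0},
    "grows": {"<end>": 1.0},
}


def generate(max_tokens=10):
    """Build a response one token at a time until an end marker appears."""
    tokens = ["<start>"]
    for _ in range(max_tokens):
        candidates = NEXT_TOKEN_PROBS[tokens[-1]]
        # Greedy choice: take the most probable next token.
        next_token = max(candidates, key=candidates.get)
        if next_token == "<end>":
            break
        tokens.append(next_token)
    return tokens[1:]  # drop the start marker


result = generate()
print(" ".join(result))
```

The `max_tokens` limit mirrors the length caps that chat tools apply so a response always stops eventually.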

What’s happening is a symphony of matrix multiplications, a math orchestra playing at lightning speed across far-flung data centers. Each layer of the neural network refines meaning, tone, and relevance, ensuring what you see feels cohesive and thoughtful.

And because the model doesn’t “think” linearly the way humans do, it can generate connections we might not immediately make, combining insights from multiple disciplines almost effortlessly.
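To show what one of those matrix multiplications looks like, here is a minimal sketch of two stacked layers. The weights and inputs are made-up numbers, and this is a generic feed-forward layer rather than a full transformer block, which also includes attention and other components.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def relu(vector):
    """Keep positive values and zero out negative ones."""
    return [max(0.0, x) for x in vector]


def layer(weights, bias, inputs):
    """One layer: multiply by the weights, add the bias, apply ReLU."""
    return relu([z + b for z, b in zip(matvec(weights, inputs), bias)])


# Made-up parameters; in a real model these "knobs" are learned in training.
x = [1.0, 2.0]
W1 = [[0.5, -1.0], [1.0, 1.0], [0.0, 2.0]]
b1 = [0.0, -1.0, 0.5]
W2 = [[1.0, 0.0, -1.0], [0.5, 0.5, 0.5]]
b2 = [0.0, 0.0]

hidden = layer(W1, b1, x)        # first layer transforms the input
output = layer(W2, b2, hidden)   # second layer refines the result
print(hidden, output)
```

An LLM repeats this kind of arithmetic across many layers and far larger matrices, which is why specialized hardware matters so much.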

Why LLMs Feel So Human

The magic of conversation lies in empathy and rhythm. Surprisingly, LLMs have learned these, not through emotion but through exposure.

When trained on billions of conversational snippets, they learn the ebb and flow of human dialogue: the pauses, affirmations, and enthusiastic tones that make text feel alive. For instance, when a user says, “I feel stuck on my startup idea,” the model doesn’t just respond with logic; it often offers encouragement, mirroring the motivational language it has learned from real human interactions.

This emotional resonance isn’t conscious, but it’s what makes AI collaboration feel natural. Business leaders, content creators, and educators find this quality invaluable when integrating AI writing or support tools into their workflows.

Business Meets the Machine: Real-World LLM Impact

Now that you’ve glimpsed the mechanics, let’s talk about value. How are these models transforming business, creativity, and innovation?

Think of industries like customer experience, marketing automation, or data analytics. LLMs are powering chatbots that can engage customers 24/7 with empathy and precision. They’re rewriting blog drafts, analyzing SEO data, generating code snippets, and even summarizing complex research, all in record time.

For professionals juggling a thousand tasks a day, AI is not just an assistant; it’s a strategic amplifier. What used to take hours of brainstorming, drafting, or revising can now be achieved in minutes, giving teams more bandwidth to innovate, ideate, and grow.

Tools like Jasper.ai, DeepSeek AI, and Fireflies.ai are reshaping productivity. AI meeting assistants summarize discussions. Copy generators craft ad campaigns. Developers use LLM-based copilots to debug and optimize in real time. Behind every one of these capabilities lies the same core principle: tokenized prediction powered by human-aligned design.

Brains and Imagination: The Dual Strength of LLMs

Here’s a fun paradox: LLMs are at their best when they balance precision and imagination. Ask them to write product descriptions, and they’ll deliver polished copy. Ask them for fresh creative ideas, and they’ll compose something thought-provoking. But ask them for hard facts or breaking news, and they might falter.

Why? Because LLMs don’t “know” in real time. They rely on what they were trained on, not a live feed of global updates. That’s why combining LLMs with connected data tools like APIs, real-time databases, and search systems creates even more powerful results.
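One common way to make that connection is to fetch relevant text first and hand it to the model alongside the question. The sketch below assumes a tiny list of placeholder notes and a deliberately naive word-overlap score; the function names are hypothetical, and production systems typically use search indexes or embeddings instead.

```python
def score(query, document):
    """Count the words a query and a document have in common (naive)."""
    query_words = set(query.lower().split())
    document_words = set(document.lower().split())
    return len(query_words & document_words)


def retrieve(query, documents, k=1):
    """Return the k documents that overlap most with the query."""
    ranked = sorted(documents, key=lambda doc: score(query, doc), reverse=True)
    return ranked[:k]


def build_prompt(query, documents):
    """Place the retrieved text in front of the question for the model."""
    context = "\n".join(retrieve(query, documents))
    return f"Use the context to answer.\nContext:\n{context}\nQuestion: {query}"


# Placeholder notes standing in for a live database or search result.
DOCUMENTS = [
    "Placeholder note: the office closes at 6 pm on Fridays.",
    "Placeholder note: the quarterly report is due next Monday.",
]

prompt = build_prompt("When is the quarterly report due?", DOCUMENTS)
print(prompt)
```

The model still predicts tokens exactly as before; it simply has fresher material to predict from.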

So, when businesses leverage AI automation, the secret ingredient is human oversight. AI drafts; humans direct. It’s this collaboration that creates truly meaningful innovation.

Beyond Text Generation: The Rise of Reasoning AI

We’re only scratching the surface. The next generation of LLMs isn’t just about generating text; it’s about understanding context, reasoning across sources, and making logical connections. These emerging models, often called “LLM 2.0” or “reasoning engines,” combine memory, symbolic logic, and multi-step reasoning capabilities to deliver richer answers.

Imagine asking your AI assistant: “Summarize the top five trends in AI ethics from the last six months.” Soon, instead of giving static summaries, it could scan verified publications, weigh perspectives, and deliver concise, referenced insights, just like a research analyst.

That’s the future enterprise leaders are preparing for. A world where language models think more like partners than tools.

Where Data Meets Imagination: The Promise of AI’s Future

As we pull the curtain back on LLMs, one thing becomes clear: they’re redefining not just how machines talk, but how humans work, think, and create. The hidden math, the tokens, the training, all of it converges into one simple magic trick: turning data into dialogue.

So next time an AI assistant answers your question or helps you write a proposal, take a second to appreciate the engineering feat behind that friendly tone. Billions of parameters, terabytes of data, and decades of research make that seamless exchange possible.

The future of human-AI collaboration isn’t cold or mechanical; it’s vibrant, creative, and deeply transformative.

And if you’re a business leader wondering how to harness it, the best time to experiment is now. Adopt AI tools. Integrate them into your workflows. Let them handle the heavy lifting while you focus on creativity and strategy.

Ready to unlock the true potential of AI for your business?