Imagine you run a mid-sized logistics company in Amsterdam. You have just integrated a shiny AI-powered tool that automatically screens job applicants to save your HR team hours of work every week. Sounds great, right? Well, under one of the strictest AI regulations in the world, that tool now falls under strict legal oversight. If you are not prepared, the penalties are steep: for the most serious violations, fines under the regulation reach up to 35 million euros or 7% of your global annual turnover, whichever is higher.

Welcome to the era of the EU AI Act. Now in force, the EU Artificial Intelligence Act is the world’s first comprehensive legal framework specifically designed to regulate artificial intelligence. For Dutch businesses, whether you are a tech startup in Amsterdam, a retailer in Eindhoven, or a healthcare provider in Utrecht, this regulation is not something you can afford to ignore.

What Exactly Is the EU AI Act?

Think of the AI Act as the GDPR’s ambitious cousin. Just as the General Data Protection Regulation (GDPR) reshaped how businesses handle personal data, the AI Act is set to fundamentally change how companies develop, deploy, and use artificial intelligence across the European Union.

The regulation applies to any organization that places AI systems on the EU market or uses AI systems within the EU, regardless of where the company is headquartered. So yes, Dutch businesses are very much in scope, and so are international companies serving Dutch customers.

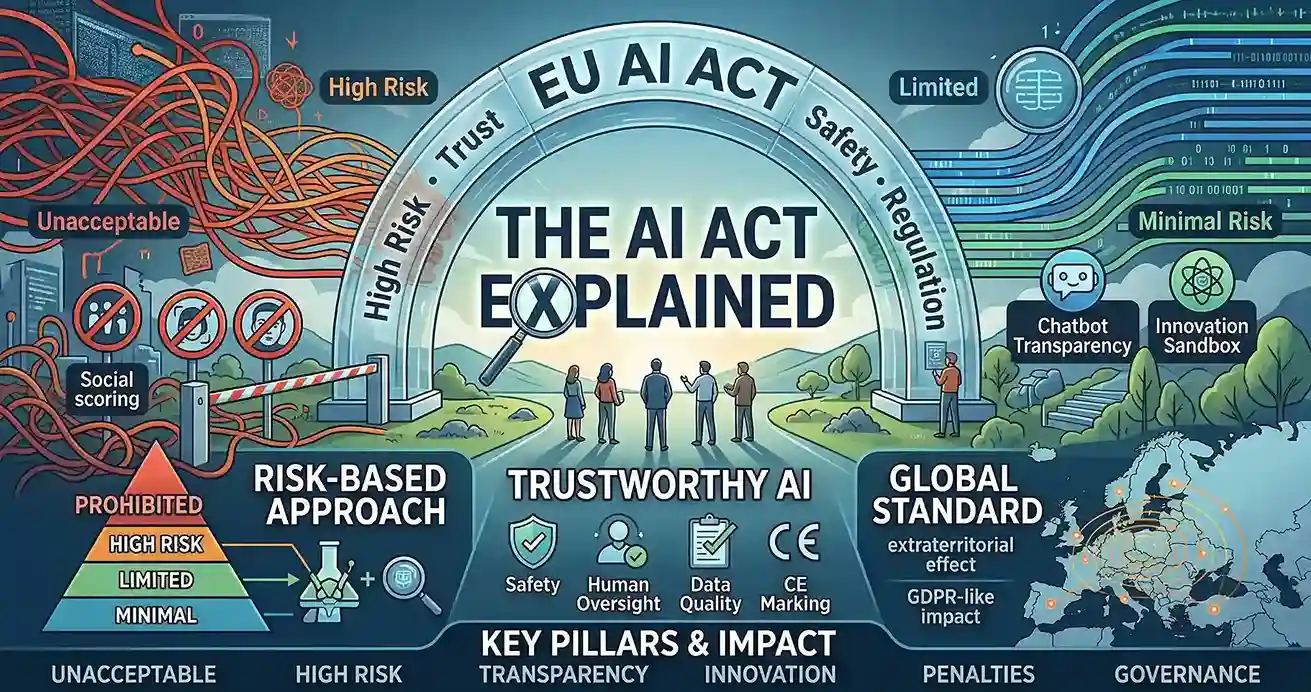

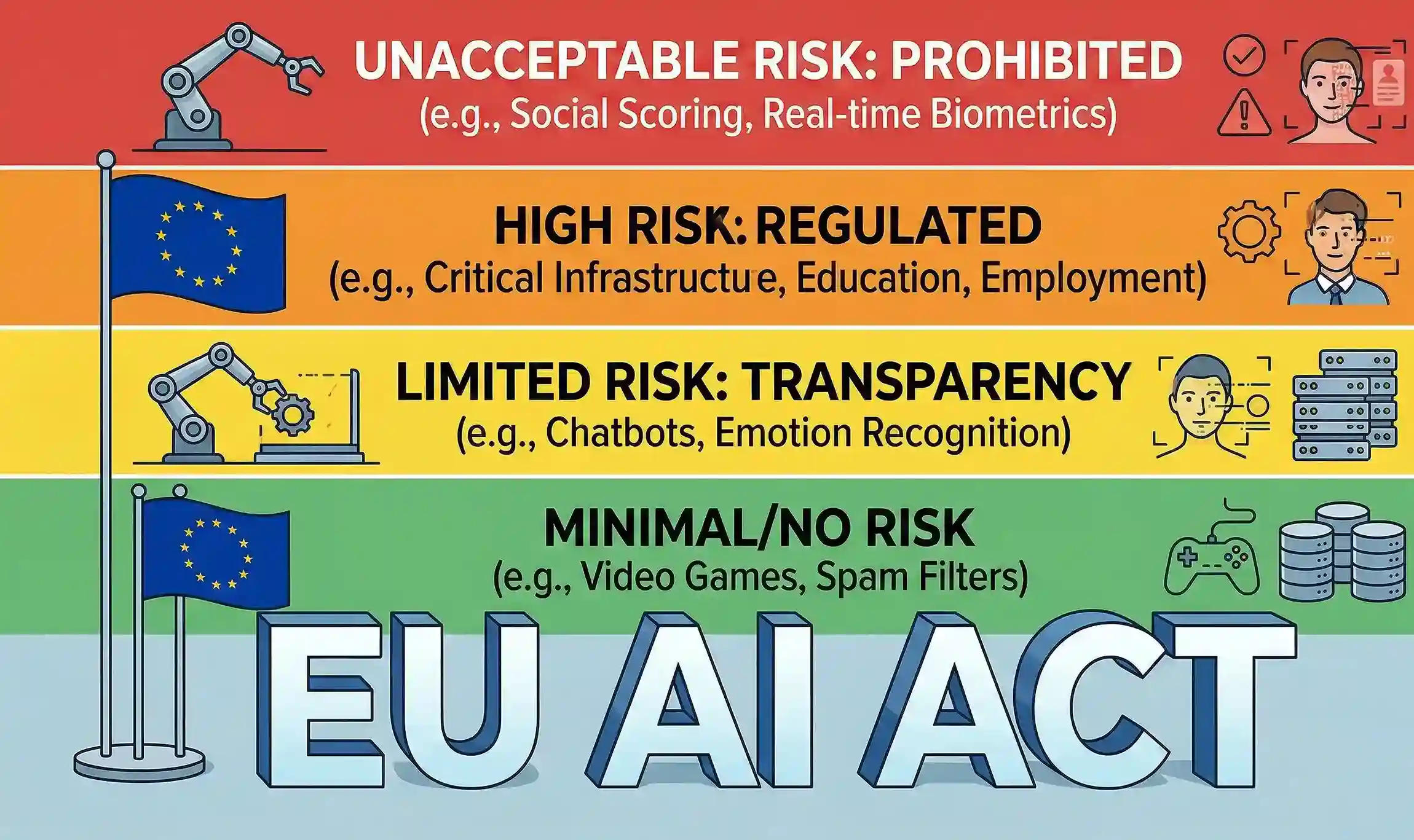

The core idea behind the Act is a risk-based approach. Not all AI is created equal. A music recommendation algorithm on a streaming platform carries very different risks compared to an AI system that decides whether someone gets a bank loan. The Act categorizes AI systems accordingly and sets rules proportional to their potential impact on people’s lives.

What Does This Mean for Dutch Businesses Specifically?

The Netherlands is one of Europe’s most digitally advanced economies. AI adoption among Dutch companies has been steadily growing, particularly in sectors like finance, manufacturing, logistics, and retail. This means a significant portion of Dutch businesses are already using AI tools that may fall under the scope of the AI Act.

Here are some concrete, real-world scenarios to help you see how the Act applies:

• A Dutch bank using an AI model to assess loan applications must ensure the model is transparent, auditable, and does not discriminate. Human oversight mechanisms must be in place.

• A hospital in Amsterdam using AI to analyze medical scans must comply with strict data quality and documentation requirements, and ensure that medical professionals can always override the AI’s conclusions.

• A Dutch HR tech company selling AI recruitment tools to other businesses must register its product in the EU’s AI database and conduct a conformity assessment before placing it on the market.

• A retail chain using AI-powered dynamic pricing needs to check whether it falls under the transparency obligations and ensure customers are not misled.

Key Obligations for High-Risk AI Providers and Deployers

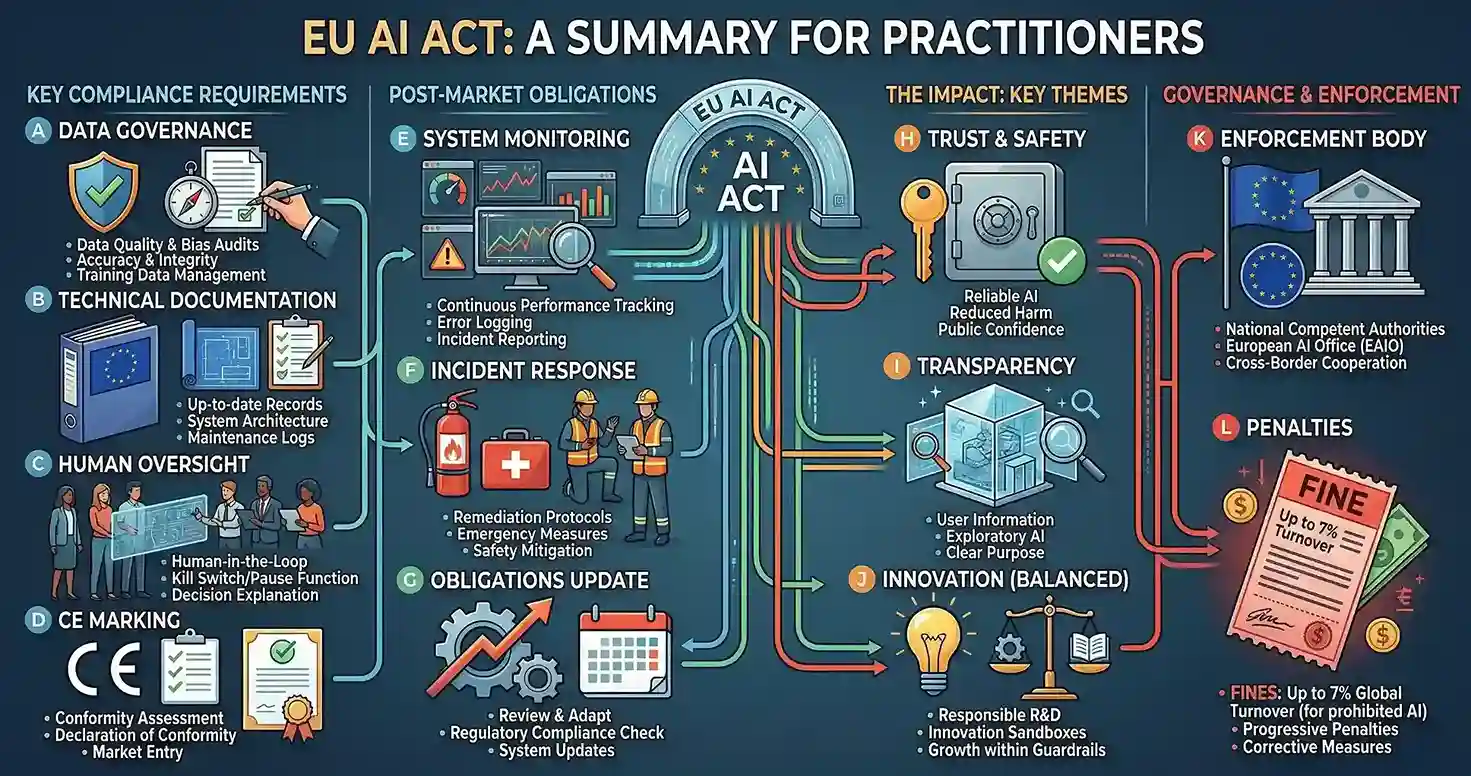

The AI Act draws a distinction between two main groups: providers (companies that develop or supply AI systems) and deployers (companies that use AI systems in their operations). Both have obligations, but providers carry the heavier regulatory load.

If your business is a provider of high-risk AI, you need to conduct a conformity assessment to demonstrate your system meets the Act’s requirements, maintain detailed technical documentation, register your system in a publicly accessible EU database, implement robust data governance and bias testing, and put a quality management system in place.

If your business is a deployer of high-risk AI (meaning you use a tool built by someone else), you need to ensure you use the system according to the provider’s instructions, conduct a fundamental rights impact assessment in certain cases, maintain logs of the system’s operation, and ensure meaningful human oversight at all times.

Think of it this way: the provider is the car manufacturer, and the deployer is the driver. The manufacturer must ensure the car is roadworthy and safe. The driver must follow the rules of the road and not use the car recklessly. Both are accountable, just in different ways.
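
To make the split between the two roles concrete, here is a minimal Python sketch of an obligations checklist. The wording of each item is paraphrased from the two paragraphs above; the names `OBLIGATIONS` and `checklist` are hypothetical and chosen only for illustration, and the list is a planning aid rather than a statement of the legal text.

```python
# Obligations for high-risk AI, paraphrased from this article (not legal text).
OBLIGATIONS = {
    "provider": [
        "Conduct a conformity assessment",
        "Maintain detailed technical documentation",
        "Register the system in the EU database",
        "Implement data governance and bias testing",
        "Operate a quality management system",
    ],
    "deployer": [
        "Use the system according to the provider's instructions",
        "Conduct a fundamental rights impact assessment where required",
        "Maintain logs of the system's operation",
        "Ensure meaningful human oversight",
    ],
}


def checklist(roles):
    """Return the combined list of obligations for the given roles."""
    items = []
    for role in roles:
        if role not in OBLIGATIONS:
            raise ValueError(f"Unknown role: {role!r}")
        items.extend(OBLIGATIONS[role])
    return items


# A business that both builds and uses a high-risk system carries both lists.
for item in checklist(["provider", "deployer"]):
    print("[ ]", item)
```

A company that only uses a vendor’s tool would call `checklist(["deployer"])` and get the shorter list.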

The Timeline: When Do the Rules Kick In?

One of the most important things to understand about the AI Act is that it is being implemented in phases. You do not have to comply with everything overnight, but you do need to start planning now.

• Phase 1 (already in effect): The Act entered into force on 1 August 2024, and the prohibitions on unacceptable-risk AI systems have applied since 2 February 2025. If you use any banned AI applications, the time to act is now.

• Phase 2 (12 months after entry into force, 2 August 2025): Rules for general-purpose AI (GPAI) models, including transparency requirements for developers of large AI models, apply from this date. Dutch tech companies building on top of AI models need to be prepared.

• Phase 3 (two years after entry into force, 2 August 2026): Full obligations for high-risk AI systems kick in, including conformity assessments and registration requirements. This is the big deadline that most Dutch businesses need to plan around.

• Phase 4 (three years after entry into force, 2 August 2027): High-risk AI systems in regulated product sectors such as medical devices must also comply. Healthcare providers and medtech companies, take note.

The window to prepare is open, but it is not unlimited. Considering the scale of documentation, risk assessments, and internal process changes required, businesses should ideally begin their compliance journey right now.
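
The phased timeline can be turned into a simple lookup for planning. The sketch below is illustrative only: the dates are the application dates in the Act as published (2 February 2025, 2 August 2025, 2 August 2026 and 2 August 2027) and should be checked against the current legal text before you rely on them. The names `PHASES`, `phases_in_effect` and `next_deadline` are hypothetical.

```python
from datetime import date

# Assumed application dates, taken from the Act as published; verify before use.
PHASES = [
    (date(2025, 2, 2), "Phase 1: prohibitions on unacceptable-risk AI"),
    (date(2025, 8, 2), "Phase 2: general-purpose AI (GPAI) model rules"),
    (date(2026, 8, 2), "Phase 3: high-risk AI system obligations"),
    (date(2027, 8, 2), "Phase 4: high-risk AI in regulated products"),
]


def phases_in_effect(on):
    """Return the labels of all phases that apply on the given date."""
    return [label for start, label in PHASES if on >= start]


def next_deadline(on):
    """Return (date, label) of the next phase to start, or None if all apply."""
    upcoming = [(start, label) for start, label in PHASES if start > on]
    return min(upcoming) if upcoming else None


today = date.today()
print("In effect:", phases_in_effect(today))
print("Next deadline:", next_deadline(today))
```

Feeding the result of `next_deadline` into your project planning gives a concrete date to work back from.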

General-Purpose AI: A Special Mention for Dutch Tech Companies

With tools like ChatGPT and the large language models behind them becoming deeply embedded in how businesses operate, the AI Act introduces specific rules for general-purpose AI (GPAI) models. These are AI models that can be used for a wide range of tasks, not just one specific purpose.

Providers of GPAI models must provide technical documentation and comply with EU copyright law. For GPAI models considered to pose systemic risk (generally, those trained with very large amounts of compute, presumed above 10^25 floating-point operations), additional obligations apply, including adversarial testing, incident reporting, and cybersecurity measures.

For Dutch tech companies building products on top of GPAI models, responsibility does not sit with the model provider alone: obligations can fall on both the upstream model provider and the downstream company that builds on the model. Knowing who is responsible for what in your AI supply chain is critical.

Who Will Enforce the AI Act in the Netherlands?

Each EU member state is required to designate national competent authorities to oversee AI Act compliance. In the Netherlands, the Dutch Data Protection Authority (Autoriteit Persoonsgegevens) is expected to play a key role given its existing mandate around data and digital rights. At EU level, the AI Office, established within the European Commission, coordinates AI governance across member states, while the Netherlands and the other member states are represented on the European Artificial Intelligence Board.

Penalties for non-compliance are serious, and each cap is the higher of a fixed amount and a share of turnover. Violations involving prohibited AI systems can result in fines of up to 35 million euros or 7% of global annual turnover. Violations of other obligations can result in fines of up to 15 million euros or 3% of global annual turnover. Providing incorrect information to authorities can lead to fines of up to 7.5 million euros or 1% of global annual turnover.
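
To see what these caps mean for a company of a given size, here is a short Python sketch of the “fixed amount or share of turnover, whichever is higher” rule stated at the start of this article. It covers only the first two tiers, and the names `FineTier`, `FINE_TIERS` and `max_fine_eur` are hypothetical. It computes the statutory ceiling, not the fine an authority would actually impose.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FineTier:
    fixed_cap_eur: int      # fixed ceiling in euros
    turnover_percent: int   # ceiling as a percentage of global annual turnover


# Figures as quoted in this article.
FINE_TIERS = {
    "prohibited_practice": FineTier(35_000_000, 7),
    "other_obligation": FineTier(15_000_000, 3),
}


def max_fine_eur(violation, global_turnover_eur):
    """Return the maximum fine: the higher of the fixed cap and the turnover share."""
    tier = FINE_TIERS[violation]
    turnover_based = global_turnover_eur * tier.turnover_percent // 100
    return max(tier.fixed_cap_eur, turnover_based)


# Turnover of 1 billion euros: 7% is 70 million, which exceeds the 35 million cap.
print(max_fine_eur("prohibited_practice", 1_000_000_000))
# Turnover of 100 million euros: 3% is 3 million, so the 15 million cap applies.
print(max_fine_eur("other_obligation", 100_000_000))
```

The turnover-based figure only takes over once turnover passes 500 million euros, the point at which 7% of turnover equals 35 million euros and 3% equals 15 million euros. Below that, the fixed amount sets the ceiling.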

How to Start Your AI Act Compliance Journey: 5 Practical Steps

Feeling a bit overwhelmed? That is completely normal. Here is a simple, actionable roadmap to get your Dutch business moving in the right direction:

• Take stock of your AI. Conduct an internal audit of all AI systems your business uses or provides. Yes, this includes third-party tools and vendor-supplied software. You need a clear picture of your AI landscape before you can assess your risk exposure.

• Classify your risk. Map each AI system to the appropriate risk category. If you are unsure, seek legal counsel or consult the European Commission’s published guidance documents. The stakes are too high to guess.

• Understand your role. Are you a provider, a deployer, or both? Your obligations differ significantly depending on your role in the AI value chain.

• Invest in documentation and governance. Start building the internal processes, documentation frameworks, and human oversight mechanisms that the Act requires. This is not a one-and-done task; it requires ongoing commitment.

• Train your team. The AI Act is not just a legal department issue. Your data scientists, product managers, HR teams, and customer service leads all need to understand how the Act affects their work. Make AI literacy a business priority.
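
The first three steps amount to building an inventory and tagging each entry with a role and a risk category. The Python sketch below shows one possible shape for such a record. It is an assumption-laden illustration: the four tier names are the common shorthand for the Act’s risk-based approach, the example entries and their classifications are invented, and `AISystem`, `Role`, `Risk` and `needs_attention` are hypothetical names.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"


class Risk(Enum):
    # Common shorthand for the risk tiers; UNCLASSIFIED marks work still to do.
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"
    UNCLASSIFIED = "unclassified"


@dataclass
class AISystem:
    name: str
    purpose: str
    supplier: str
    roles: set
    risk: Risk = Risk.UNCLASSIFIED


def needs_attention(inventory):
    """Return systems that are banned, high-risk, or not yet classified."""
    flagged = {Risk.UNACCEPTABLE, Risk.HIGH, Risk.UNCLASSIFIED}
    return [system for system in inventory if system.risk in flagged]


# Invented example entries; real classifications need legal review.
inventory = [
    AISystem("Applicant screening", "Ranks job applicants",
             "third-party vendor", {Role.DEPLOYER}, Risk.HIGH),
    AISystem("Dynamic pricing", "Adjusts prices automatically",
             "in-house", {Role.PROVIDER, Role.DEPLOYER}),
    AISystem("Playlist recommender", "Suggests background music",
             "third-party vendor", {Role.DEPLOYER}, Risk.MINIMAL),
]

for system in needs_attention(inventory):
    print(system.name, "->", system.risk.value)
```

Defaulting new entries to `UNCLASSIFIED` keeps anything that has not been reviewed on the follow-up list instead of letting it pass silently as low risk.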

The Opportunity Hidden in the Regulation

Here is a perspective worth holding onto: regulation is not just a compliance burden. For Dutch businesses willing to embrace it proactively, the AI Act can be a genuine competitive advantage.

Consumers and business partners increasingly care about trustworthy AI. Companies that can demonstrate robust AI governance, transparency, and ethical design will stand out. In industries like healthcare, finance, and HR, where trust is everything, being able to say your AI is fully compliant with EU law is a powerful differentiator.

The Netherlands has always punched above its weight in innovation. Dutch companies that get ahead of AI compliance now will be better positioned to expand across Europe, attract international partnerships, and build the kind of long-term customer trust that no marketing campaign can buy.

From Innovation to Accountability: Navigating the EU AI Act

The EU AI Act is arguably the most significant piece of technology legislation since the GDPR. For Dutch businesses, it signals a new era where AI is not just a tool for efficiency, but a domain of responsibility.

The key takeaways are clear: know what AI systems you are using, understand your risk category, identify whether you are a provider or deployer, start building your compliance processes now, and train your people. The phased timeline gives you room to act, but not to procrastinate.

The good news is that the Dutch business community is no stranger to adapting to regulation. You navigated GDPR, and you can navigate this too. The businesses that do so with enthusiasm rather than reluctance will come out ahead.

Ready to take the next step? Start with an internal AI audit this week. Map out every AI tool your business touches, document it, and flag any that could be high-risk.

The AI Act is not a threat to innovation. It is an invitation to build AI that people can actually trust. And that, for forward-thinking Dutch businesses, is the greatest opportunity of the decade.